from Lars Syll

Do you believe that 10 to 20% of the decline in crime in the 1990s was caused by an increase in abortions in the 1970s? Or that the murder rate would have increased by 250% since 1974 if the United States had not built so many new prisons? Did you believe predictions that the welfare reform of the 1990s would force 1,100,000 children into poverty?

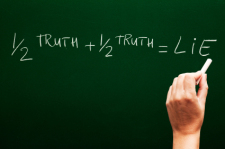

If you were misled by any of these studies, you may have fallen for a pernicious form of junk science: the use of mathematical modeling to evaluate the impact of social policies. These studies are superficially impressive. Produced by reputable social scientists from prestigious institutions, they are often published in peer reviewed scientific journals. They are filled with statistical calculations too complex for anyone but another specialist to untangle. They give precise numerical “facts” that are often quoted in policy debates. But these “facts” turn out to be will o’ the wisps …

These predictions are based on a statistical technique called multiple regression that uses correlational analysis to make causal arguments … The problem with this, as anyone who has studied statistics knows, is that correlation is not causation. A correlation between two variables may be “spurious” if it is caused by some third variable. Multiple regression researchers try to overcome the spuriousness problem by including all the variables in analysis. The data available for this purpose simply is not up to this task, however, and the studies have consistently failed.
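The spuriousness problem described above can be made concrete with a small simulation. This is only a minimal sketch under stated assumptions: the data are synthetic, the variable names `x`, `y` and `z` are hypothetical, and the helpers `corr` and `residuals` are written here for illustration rather than taken from any of the studies mentioned. Two series that never influence each other still correlate at around 0.5 because both are driven by a third variable, and the correlation vanishes only because that third variable happens to be known and measured.

```python
import random

def corr(a, b):
    """Pearson correlation of two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((u - ma) * (v - mb) for u, v in zip(a, b))
    va = sum((u - ma) ** 2 for u in a)
    vb = sum((v - mb) ** 2 for v in b)
    return cov / (va * vb) ** 0.5

def residuals(y, z):
    """Residuals of y after a simple least-squares regression on z."""
    n = len(y)
    my, mz = sum(y) / n, sum(z) / n
    slope = (sum((u - mz) * (v - my) for u, v in zip(z, y))
             / sum((u - mz) ** 2 for u in z))
    return [v - my - slope * (u - mz) for u, v in zip(z, y)]

rng = random.Random(0)  # fixed seed; the data are purely illustrative
n = 5000
z = [rng.gauss(0, 1) for _ in range(n)]   # hypothetical third variable
x = [zi + rng.gauss(0, 1) for zi in z]    # driven by z, never by y
y = [zi + rng.gauss(0, 1) for zi in z]    # driven by z, never by x

raw = corr(x, y)                                   # sizeable by construction
partial = corr(residuals(x, z), residuals(y, z))   # z has been controlled for
print(f"raw correlation: {raw:.2f}, after controlling for z: {partial:.2f}")
```

In real policy data the analogue of `z` is typically unknown or unmeasured, which is exactly why "including all the variables" is so much harder than it sounds.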

Mainstream economists often hold the view that if you are critical of econometrics, it can only be because you are a sadly misinformed and misguided person who dislikes and does not understand much of it.

As Goertzel’s eminent article shows, this is, however, nothing but a gross misapprehension.

And just like Goertzel, Keynes certainly did not misunderstand the crucial issues at stake in his critique of econometrics. Quite the contrary. He knew them all too well, and was not satisfied with the validity and philosophical underpinnings of the assumptions made for applying its methods.

Keynes’ critique is still valid and unanswered in the sense that the problems he pointed at are still with us today and ‘unsolved.’ Ignoring them — the most common practice among applied econometricians — is not to solve them.

To apply statistical and mathematical methods to the real-world economy, the econometrician has to make some quite strong assumptions. In a review of Tinbergen’s econometric work — published in The Economic Journal in 1939 — Keynes gave a comprehensive critique of Tinbergen’s work, focusing on the limiting and unreal character of the assumptions that econometric analyses build on:

Completeness: Where Tinbergen attempts to specify and quantify which different factors influence the business cycle, Keynes maintains there has to be a complete list of all the relevant factors to avoid misspecification and spurious causal claims. Usually, this problem is ‘solved’ by econometricians assuming that they somehow have a ‘correct’ model specification. Keynes is, to put it mildly, unconvinced:

It will be remembered that the seventy translators of the Septuagint were shut up in seventy separate rooms with the Hebrew text and brought out with them, when they emerged, seventy identical translations. Would the same miracle be vouchsafed if seventy multiple correlators were shut up with the same statistical material? And anyhow, I suppose, if each had a different economist perched on his a priori, that would make a difference to the outcome.

Homogeneity: To make inductive inferences possible, and to be able to apply econometrics, the system we try to analyse has to have a large degree of ‘homogeneity.’ According to Keynes, most social and economic systems, especially from the perspective of real historical time, lack that ‘homogeneity.’ As he had argued already in Treatise on Probability, it wasn’t always possible to take repeated samples from a fixed population when we were analysing real-world economies. In many cases, there simply are no reasons at all to assume the samples to be homogenous. Lack of ‘homogeneity’ makes the principle of ‘limited independent variety’ non-applicable, and hence makes inductive inferences, strictly seen, impossible since one of their fundamental logical premises is not satisfied. Without “much repetition and uniformity in our experience” there is no justification for placing “great confidence” in our inductions.

And then, of course, there is also the ‘reverse’ variability problem of non-excitation: factors that do not change significantly during the period analysed can still very well be extremely important causal factors.
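The non-excitation problem can be sketched in a few lines. Everything here is an assumption made for illustration: the "true" process `outcome`, its coefficients, and the helper `ols` are hypothetical. A factor `w` matters more than `x`, but because it never moves during the sample, the regression cannot see it and silently folds it into the intercept.

```python
def ols(x, y):
    """Intercept and slope of a simple least-squares fit of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return my - slope * mx, slope

def outcome(x, w):
    """Hypothetical 'true' process in which w matters more than x."""
    return 2.0 * x + 5.0 * w

# During the sample period w never moves, so the data cannot reveal its effect.
xs = [float(i) for i in range(10)]
w_sample = 1.0
ys = [outcome(x, w_sample) for x in xs]

intercept, slope = ols(xs, ys)  # w's influence is hidden inside the intercept

# After the sample period w shifts, and the fitted model cannot respond.
x_new, w_new = 4.0, 2.0
predicted = intercept + slope * x_new
actual = outcome(x_new, w_new)
print(f"slope on x: {slope:.1f}, predicted: {predicted:.1f}, actual: {actual:.1f}")
```

The fit inside the sample is perfect, which is precisely what makes the omission invisible until `w` changes.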

Stability: Tinbergen assumes there is a stable spatio-temporal relationship between the variables his econometric models analyze. But as Keynes had argued already in his Treatise on Probability it was not really possible to make inductive generalisations based on correlations in one sample. As later studies of ‘regime shifts’ and ‘structural breaks’ have shown us, it is exceedingly difficult to find and establish the existence of stable econometric parameters for anything but rather short time series.
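What a structural break does to an estimated parameter can be illustrated with a deliberately stylised example. The two regimes and the helper `ols_slope` are hypothetical, chosen only to make the arithmetic transparent: the relation between `x` and `y` flips sign halfway through, and a regression over the whole period reports no relation at all.

```python
def ols_slope(x, y):
    """Slope of a simple least-squares fit of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

# Hypothetical series: the same x values are observed under two regimes.
x_regime = [float(i) for i in range(20)]
y_before = [1.0 * x for x in x_regime]   # regime 1: y rises with x
y_after = [-1.0 * x for x in x_regime]   # regime 2: the relation has flipped

slope_before = ols_slope(x_regime, y_before)
slope_after = ols_slope(x_regime, y_after)
slope_pooled = ols_slope(x_regime + x_regime, y_before + y_after)

print(f"before: {slope_before:.2f}, after: {slope_after:.2f}, "
      f"pooled: {slope_pooled:.2f}")
```

The pooled coefficient describes neither regime. It is an average over a relationship that did not stay put.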

Measurability: Tinbergen’s model assumes that all relevant factors are measurable. Keynes questions if it is possible to adequately quantify and measure things like expectations and political and psychological factors. And more than anything, he questioned — both on epistemological and ontological grounds — that it was always and everywhere possible to measure real-world uncertainty with the help of probabilistic risk measures. Thinking otherwise can, as Keynes wrote, “only lead to error and delusion.”

Independence: Tinbergen assumes that the variables he treats are independent (still a standard assumption in econometrics). Keynes argues that in such a complex, organic and evolutionary system as an economy, independence is a deeply unrealistic assumption to make. Building econometric models on such simplistic and unrealistic assumptions risks producing nothing but spurious correlations and causalities. Real-world economies are organic systems for which the statistical methods used in econometrics are ill-suited, or even, strictly seen, inapplicable. Mechanical probabilistic models have little leverage when applied to non-atomic evolving organic systems, such as economies.

It is a great fault of symbolic pseudo-mathematical methods of formalising a system of economic analysis … that they expressly assume strict independence between the factors involved and lose all their cogency and authority if this hypothesis is disallowed; whereas, in ordinary discourse, where we are not blindly manipulating but know all the time what we are doing and what the words mean, we can keep “at the back of our heads” the necessary reserves and qualifications and the adjustments which we shall have to make later on, in a way in which we cannot keep complicated partial differentials “at the back” of several pages of algebra which assume that they all vanish.

Building econometric models can’t be a goal in itself. Good econometric models are means that make it possible for us to infer things about the real-world systems they ‘represent.’ If we can’t show that the mechanisms or causes that we isolate and handle in our econometric models are ‘exportable’ to the real world, they are of limited value to our understanding, explanations or predictions of real-world economic systems.

The kind of fundamental assumption about the character of material laws, on which scientists appear commonly to act, seems to me to be much less simple than the bare principle of uniformity. They appear to assume something much more like what mathematicians call the principle of the superposition of small effects, or, as I prefer to call it, in this connection, the atomic character of natural law.

The system of the material universe must consist, if this kind of assumption is warranted, of bodies which we may term (without any implication as to their size being conveyed thereby) legal atoms, such that each of them exercises its own separate, independent, and invariable effect, a change of the total state being compounded of a number of separate changes each of which is solely due to a separate portion of the preceding state …

Yet if different wholes were subject to laws qua wholes and not simply on account of and in proportion to the differences of their parts, knowledge of a part could not lead, it would seem, even to presumptive or probable knowledge as to its association with other parts.

Linearity: To make his models tractable, Tinbergen assumes the relationships between the variables he studies to be linear. This is still standard procedure today, but as Keynes writes:

It is a very drastic and usually improbable postulate to suppose that all economic forces are of this character, producing independent changes in the phenomenon under investigation which are directly proportional to the changes in themselves; indeed, it is ridiculous.

To Keynes, it was a ‘fallacy of reification’ to assume that all quantities are additive (an assumption closely linked to independence and linearity).

The unpopularity of the principle of organic unities shows very clearly how great is the danger of the assumption of unproved additive formulas. The fallacy, of which ignorance of organic unity is a particular instance, may perhaps be mathematically represented thus: suppose f(x) is the goodness of x and f(y) is the goodness of y. It is then assumed that the goodness of x and y together is f(x) + f(y) when it is clearly f(x + y) and only in special cases will it be true that f(x + y) = f(x) + f(y). It is plain that it is never legitimate to assume this property in the case of any given function without proof.

J. M. Keynes “Ethics in Relation to Conduct” (1903)
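Keynes’ formula is easy to check numerically. In the sketch below, the two functions `linear` and `convex` and the values of `x` and `y` are hypothetical stand-ins, not anything Keynes himself used. The additive property holds for the first function and fails for the second.

```python
def linear(v):
    """A function for which the additive formula happens to hold."""
    return 3.0 * v

def convex(v):
    """A function for which the whole differs from the sum of the parts."""
    return v ** 2

x, y = 2.0, 5.0  # arbitrary illustrative values

for name, f in (("linear", linear), ("convex", convex)):
    together = f(x + y)        # f(x + y): the parts taken as a whole
    summed = f(x) + f(y)       # f(x) + f(y): the parts taken separately
    print(f"{name}: f(x + y) = {together}, f(x) + f(y) = {summed}")
```

Additivity is a property of particular functions, and it has to be shown rather than assumed.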

And as even one of the founding fathers of modern econometrics — Trygve Haavelmo — wrote:

What is the use of testing, say, the significance of regression coefficients, when maybe, the whole assumption of the linear regression equation is wrong?
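Haavelmo’s worry can be sketched with a toy example. It rests on assumptions chosen purely for illustration: a hypothetical relation `y = x**2`, observed symmetrically around zero, and a small helper `fit_line` written for this sketch. Here `y` is completely determined by `x`, yet the fitted line has a slope of zero and explains none of the variation.

```python
def fit_line(x, y):
    """Slope and R-squared of a simple least-squares line of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((b - intercept - slope * a) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return slope, 1.0 - ss_res / ss_tot

# Hypothetical data: y depends on x exactly, but not linearly.
xs = [float(i) for i in range(-5, 6)]
ys = [x ** 2 for x in xs]

slope, r_squared = fit_line(xs, ys)
print(f"slope: {slope:.2f}, R-squared: {r_squared:.2f}")
```

A significance test on this coefficient would conclude that `x` does not matter, when the only thing that has been tested is the linear form.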

Real-world social systems are usually not governed by stable causal mechanisms or capacities. The kinds of ‘laws’ and relations that econometrics has established are laws and relations about entities in models that presuppose causal mechanisms and variables, and the relationships between them, being linear, additive, homogenous, stable, invariant and atomistic. But when causal mechanisms operate in the real world, they only do so in ever-changing and unstable combinations where the whole is more than a mechanical sum of parts. Since statisticians and econometricians, as far as I can see, haven’t been able to convincingly warrant their assumptions of homogeneity, stability, invariance, independence and additivity as being ontologically isomorphic to real-world economic systems, Keynes’ critique is still valid. As long as, as Keynes writes in a letter to Frisch in 1935, “nothing emerges at the end which has not been introduced expressively or tacitly at the beginning,” I remain doubtful of the scientific aspirations of econometrics.

In his critique of Tinbergen, Keynes points us to the fundamental logical, epistemological and ontological problems of applying statistical methods to a basically unpredictable, uncertain, complex, unstable, interdependent, and ever-changing social reality. Methods designed to analyse repeated sampling in controlled experiments under fixed conditions are not easily extended to an organic and non-atomistic world where time and history play decisive roles.

Econometric modelling should never be a substitute for thinking. From that perspective, it is really depressing to see how much of Keynes’ critique of the pioneering econometrics of the 1930s and 1940s is still relevant today. And that is also a reason why we, as does Goertzel, have to keep on criticizing it.

The general line you take is interesting and useful. It is, of course, not exactly comparable with mine. I was raising the logical difficulties. You say in effect that, if one was to take these seriously, one would give up the ghost in the first lap, but that the method, used judiciously as an aid to more theoretical enquiries and as a means of suggesting possibilities and probabilities rather than anything else, taken with enough grains of salt and applied with superlative common sense, won’t do much harm. I should quite agree with that. That is how the method ought to be used.

Keynes, letter to E.J. Broster, December 19, 1939