from Lars Syll

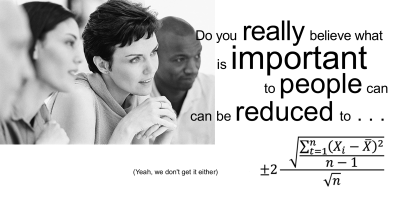

We recommend dropping the NHST [null hypothesis significance testing] paradigm, and the p-value thresholds associated with it, as the default statistical paradigm for research, publication, and discovery in the biomedical and social sciences. Specifically, rather than allowing statistical significance as determined by p < 0.05 (or some other statistical threshold) to serve as a lexicographic decision rule in scientific publication and statistical decision making more broadly as per the status quo, we propose that the p-value be demoted from its threshold screening role and instead, treated continuously, be considered along with the neglected factors [such factors as prior and related evidence, plausibility of mechanism, study design and data quality, real world costs and benefits, novelty of finding, and other factors that vary by research domain] as just one among many pieces of evidence.

We make this recommendation for three broad reasons. First, in the biomedical and social sciences, the sharp point null hypothesis of zero effect and zero systematic error used in the overwhelming majority of applications is generally not of interest because it is generally implausible. Second, the standard use of NHST, to take the rejection of this straw man sharp point null hypothesis as positive or even definitive evidence in favor of some preferred alternative hypothesis, is a logical fallacy that routinely results in erroneous scientific reasoning even by experienced scientists and statisticians. Third, p-value and other statistical thresholds encourage researchers to study and report single comparisons rather than focusing on the totality of their data and results.

As shown over and over again when significance tests are applied, people have a tendency to read ‘not disconfirmed’ as ‘probably confirmed.’ Standard scientific methodology tells us that when there is only, say, a 10% probability that pure sampling error could account for the observed difference between the data and the null hypothesis, it would be more ‘reasonable’ to conclude that we have a case of disconfirmation. And if we perform many independent tests of our hypothesis and they all give about the same 10% result as the one we reported, I guess most researchers would count the hypothesis as even more disconfirmed.
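To put a rough number on that last intuition, here is a minimal sketch using Fisher's method for combining independent p-values. This is an illustration I am adding, not something the argument above relies on, and it assumes the tests really are independent. The helper names `chi2_sf_even_df` and `fisher_combined_p` are made up for this example.

```python
import math

def chi2_sf_even_df(x: float, df: int) -> float:
    """Upper-tail probability of a chi-square variable with even df.

    For df = 2k the tail has the closed form
    exp(-x/2) * sum_{i=0}^{k-1} (x/2)^i / i!.
    """
    if df <= 0 or df % 2 != 0:
        raise ValueError("df must be a positive even integer")
    half = x / 2.0
    term, total = 1.0, 1.0
    for i in range(1, df // 2):
        term *= half / i          # builds (x/2)^i / i! incrementally
        total += term
    return math.exp(-half) * total

def fisher_combined_p(p_values):
    """Fisher's method: -2 * sum(ln p_i) is chi-square with 2k df under the null."""
    stat = -2.0 * sum(math.log(p) for p in p_values)
    return chi2_sf_even_df(stat, 2 * len(p_values))

# Combine k independent tests that each report p = 0.10.
for k in (1, 2, 5, 10):
    print(k, fisher_combined_p([0.10] * k))
```

With a single test the combined value is just 0.10, but five independent tests at 0.10 each already combine to roughly 0.011. None of them would pass a 0.05 threshold individually, which is one more reason to treat the p-value continuously rather than as a screening rule.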

We should never forget that the underlying parameters we use when performing significance tests are model constructions. Our p-values mean nothing if the model is wrong. And most importantly — statistical significance tests DO NOT validate models!

In journal articles a typical regression equation will have an intercept and several explanatory variables. The regression output will usually include an F-test, with p – 1 degrees of freedom in the numerator and n – p in the denominator. The null hypothesis will not be stated. The missing null hypothesis is that all the coefficients vanish, except the intercept.
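As a concrete reference point, the overall F-statistic described here can be sketched for the simplest case of an intercept plus one explanatory variable, so that p = 2 and the degrees of freedom are p - 1 = 1 and n - p. The function name `overall_f_statistic` and the simulated data are assumptions made for illustration only.

```python
import random

def overall_f_statistic(x, y):
    """Overall F for y = a + b*x + error, i.e. p = 2 coefficients.

    Tests the unstated null that every coefficient except the
    intercept is zero. Returns (F, numerator df, denominator df).
    """
    n, p = len(y), 2
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    fitted = [intercept + slope * xi for xi in x]
    ess = sum((fi - mean_y) ** 2 for fi in fitted)          # explained sum of squares
    rss = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))  # residual sum of squares
    df_num, df_den = p - 1, n - p
    return (ess / df_num) / (rss / df_den), df_num, df_den

# Hypothetical data: 50 observations with a true slope of 0.5.
rng = random.Random(0)
x = [rng.gauss(0, 1) for _ in range(50)]
y = [1.0 + 0.5 * xi + rng.gauss(0, 1) for xi in x]
f_stat, df_num, df_den = overall_f_statistic(x, y)
print(f"F = {f_stat:.2f} on {df_num} and {df_den} degrees of freedom")
```

Note that nothing in this calculation checks whether the linear model with independent errors is an adequate description of the data. The statistic is computed inside the model.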

If F is significant, that is often thought to validate the model. Mistake. The F-test takes the model as given. Significance only means this: if the model is right and the coefficients are 0, it is very unlikely to get such a big F-statistic. Logically, there are three possibilities on the table:

i) An unlikely event occurred.

ii) Or the model is right and some of the coefficients differ from 0.

iii) Or the model is wrong.

So?
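Possibility iii) is easy to see in a small simulation. The sketch below is my own illustration, not part of the quoted text. It regresses one random walk on another, completely unrelated, random walk. The regression model's assumption of independent errors is then wrong, and the F-statistic comes out "significant" far more often than the nominal 5%. The 5% cutoff is calibrated by simulation on data where the model is right and the slope is zero. All function names, the sample size, and the seed are assumptions chosen for the example.

```python
import random

def f_statistic(x, y):
    """Overall F for a regression of y on an intercept and x (1 and n - 2 df)."""
    n = len(y)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    ess = sxy ** 2 / sxx      # explained sum of squares
    rss = syy - ess           # residual sum of squares
    return ess / (rss / (n - 2))

def white_noise(rng, n):
    """Independent draws: the regression model's error assumptions hold."""
    return [rng.gauss(0, 1) for _ in range(n)]

def random_walk(rng, n):
    """Cumulated shocks: strongly dependent over time, so the model is wrong."""
    level, path = 0.0, []
    for _ in range(n):
        level += rng.gauss(0, 1)
        path.append(level)
    return path

def simulate(make_series, rng, n=100, reps=2000):
    """F-statistics from regressing one series on another, unrelated one."""
    return [f_statistic(make_series(rng, n), make_series(rng, n))
            for _ in range(reps)]

rng = random.Random(42)

# Model right, coefficient zero: the empirical 95th percentile is the 5% cutoff.
null_fs = sorted(simulate(white_noise, rng))
critical = null_fs[int(0.95 * len(null_fs))]

# Model wrong, and y still has nothing to do with x.
walk_fs = simulate(random_walk, rng)
share = sum(f > critical for f in walk_fs) / len(walk_fs)

print(f"simulated 5% critical value: {critical:.2f}")
print(f"share of 'significant' F-tests for unrelated random walks: {share:.0%}")
```

A significant F here does not signal that an unlikely event occurred, and it does not signal that x matters for y. It only reflects that the F-test took a wrong model as given.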