
Topics:

Lars Pålsson Syll considers the following as important: Statistics & Econometrics


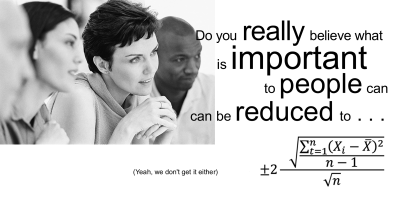

Why statistical significance is worthless in science

There are around 20 common misunderstandings and abuses of p-values and NHST [Null Hypothesis Significance Testing]. Most of them are related to the definition of the p-value … Other misunderstandings are about the implications of statistical significance.

Statistical significance does not mean substantive significance: just because an observation (or a more extreme observation) would have been unlikely had there been no difference in the population does not mean that the observed difference is large enough to be of practical relevance. With large enough samples, any nonzero difference will be statistically significant regardless of effect size.
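To make the sample-size point concrete, here is a minimal sketch assuming the simplest possible setting: a two-sample z-test with a known, common standard deviation, where the observed standardized difference (Cohen's d) is held fixed at a hypothetical 0.01 standard deviations while only the group size grows. The function name `two_sided_p` is illustrative, not from any library.

```python
from statistics import NormalDist


def two_sided_p(effect_size: float, n_per_group: int) -> float:
    """Two-sided p-value of a two-sample z-test (known common SD) when the
    observed standardized mean difference equals effect_size."""
    # Under the null, the standardized difference has standard error sqrt(2/n).
    z = effect_size / (2 / n_per_group) ** 0.5
    # Use the lower tail directly to avoid cancellation for very large |z|.
    return 2 * NormalDist().cdf(-abs(z))


tiny_effect = 0.01  # hypothetical: one hundredth of a standard deviation
for n in (100, 10_000, 1_000_000):
    print(f"n per group = {n:>9,}: p = {two_sided_p(tiny_effect, n):.3g}")
```

The same practically negligible difference moves from nowhere near significance to an extremely small p-value purely because n grows.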

Statistical non-significance does not entail equivalence: a failure to reject the null hypothesis is just that. It does not mean that the two groups are equivalent, since statistical non-significance can be due to low sample size.
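One way to see why non-significance can simply reflect a small sample is to compute statistical power. The sketch below assumes a two-sided two-sample z-test with known standard deviation and a hypothetical true effect of half a standard deviation; `power_two_sample_z` is an illustrative helper, not a library function.

```python
from statistics import NormalDist


def power_two_sample_z(effect_size: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Probability that a two-sided two-sample z-test rejects the null
    when the true standardized difference is effect_size."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = effect_size / (2 / n_per_group) ** 0.5  # expected z under the alternative
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


for n in (10, 50, 100):
    print(f"n per group = {n:>3}: power = {power_two_sample_z(0.5, n):.2f}")
```

With 10 per group, a real medium-sized effect goes undetected most of the time, so "not significant" says little about equivalence.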

A low p-value does not imply a large effect size: p-values depend on several things besides effect size, such as sample size and spread.
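A small sketch of how spread and sample size can outweigh effect size, assuming a two-sample z-test with known standard deviation. Both "studies" below are hypothetical numbers chosen for illustration.

```python
from statistics import NormalDist


def two_sided_p(mean_diff: float, sd: float, n_per_group: int) -> float:
    """Two-sided p-value of a two-sample z-test with known common SD."""
    standard_error = sd * (2 / n_per_group) ** 0.5
    z = mean_diff / standard_error
    return 2 * NormalDist().cdf(-abs(z))


# Hypothetical study A: large difference (d = 0.5), noisy data, small groups.
p_a = two_sided_p(mean_diff=10.0, sd=20.0, n_per_group=15)
# Hypothetical study B: small difference (d = 0.25), precise data, larger groups.
p_b = two_sided_p(mean_diff=0.5, sd=2.0, n_per_group=200)

print(f"study A: p = {p_a:.3f}")
print(f"study B: p = {p_b:.3f}")
```

Study B ends up with the lower p-value even though its effect is smaller both in raw units and in standard-deviation units.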

It is not the probability of the null hypothesis: as we saw, it is the conditional probability of the data, or more extreme data, given the null hypothesis.

It is not the probability of the null hypothesis given the results: that is the fallacy of the transposed conditional, since the p-value runs the other way around. It is the probability of data at least as extreme, given the null.
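To see how far apart the two conditionals can be, here is a Bayes' rule sketch. It simplifies by conditioning on "the result was significant at alpha" rather than on an exact p-value. The prior probability of the null (0.9) and the power (0.8) are hypothetical assumptions, and the helper name is illustrative.

```python
def prob_null_given_significant(prior_null: float, alpha: float, power: float) -> float:
    """P(null true | significant result) via Bayes' rule."""
    false_positives = prior_null * alpha        # P(null) * P(significant | null)
    true_positives = (1 - prior_null) * power   # P(alternative) * P(significant | alternative)
    return false_positives / (false_positives + true_positives)


print(round(prob_null_given_significant(prior_null=0.9, alpha=0.05, power=0.8), 2))  # → 0.36
```

Under these assumptions, a "significant at 5%" result still leaves roughly a 36% probability that the null is true.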

It is not the probability of falsely rejecting the null hypothesis: that would be alpha, not p.
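The distinction can be checked by simulation. This sketch assumes a one-sample z-test on normal data with known standard deviation 1, with the null (mean 0) true in every simulated study. The long-run share of studies that reject is about alpha, whatever individual p-values happen to turn up. Sample sizes and the seed are arbitrary choices.

```python
import random
from statistics import NormalDist, fmean


def simulate_rejection_rate(n_studies=20_000, n_obs=30, alpha=0.05, seed=1):
    """Fraction of simulated studies that reject a true null at level alpha."""
    rng = random.Random(seed)
    std_normal = NormalDist()
    rejections = 0
    for _ in range(n_studies):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n_obs)]  # null is true: mean 0, SD 1
        z = fmean(sample) * n_obs ** 0.5                      # z-test with known SD = 1
        if 2 * std_normal.cdf(-abs(z)) < alpha:
            rejections += 1
    return rejections / n_studies


print(f"false rejection rate ≈ {simulate_rejection_rate():.3f}")
```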

It is not the probability that the results are a statistical fluke: the test statistic is calculated under the assumption that all deviations from the null are due to chance. Thus, it cannot be used to estimate the probability of a statistical fluke, since that probability is already assumed to be 100%.

Rejecting the null hypothesis is not confirmation of a causal mechanism: you can imagine a great number of potential explanations for deviations from the null. Rejecting the null does not prove a specific one. See the above example with suicide rates.
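Confounding is one of the many alternative explanations. In the hypothetical simulation below, a hidden variable drives both x and y, and y does not depend on x at all. The correlation test still rejects the null decisively. The p-value uses the Fisher z-transformation, a large-sample normal approximation; helper names are illustrative.

```python
import math
import random
from statistics import NormalDist, fmean


def pearson_r(xs, ys):
    mx, my = fmean(xs), fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def correlation_p_value(r, n):
    """Two-sided p-value for H0: rho = 0 via the Fisher z approximation."""
    z = math.atanh(r) * math.sqrt(n - 3)
    return 2 * NormalDist().cdf(-abs(z))


rng = random.Random(42)
n = 500
confounder = [rng.gauss(0.0, 1.0) for _ in range(n)]
x = [c + rng.gauss(0.0, 1.0) for c in confounder]
y = [c + rng.gauss(0.0, 1.0) for c in confounder]  # y is NOT caused by x

r = pearson_r(x, y)
p = correlation_p_value(r, n)
print(f"r = {r:.2f}, p = {p:.2g}")
```

The test correctly says "x and y are associated", but it cannot tell a causal link from a common cause.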

NHST promotes arbitrary data dredging (“p-value fishing”): if you test your entire dataset and do not attain statistical significance, it is tempting to test a number of subgroups. Maybe the real effect occurs in men, women, old, young, whites, blacks, Hispanics, Asians, thin, obese, etc.? More likely, you will get a number of spurious results that appear statistically significant but are really false positives. In the quest for statistical significance, this unethical behavior is common.
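The arithmetic of fishing is easy to sketch. Assuming independent subgroup tests where every null is true, the chance of at least one "significant" result is 1 − (1 − α)^k. The simulation checks this with hypothetical subgroups of normal data. Real subgroups such as "women" and "old" overlap, so independence is a simplifying assumption.

```python
import random
from statistics import NormalDist, fmean


def familywise_error_rate(n_tests: int, alpha: float = 0.05) -> float:
    """P(at least one p < alpha) over n independent tests of true nulls."""
    return 1 - (1 - alpha) ** n_tests


def null_subgroup_p_value(rng, n_obs):
    """p-value of a z-test (known SD = 1) on a subgroup with no real effect."""
    sample = [rng.gauss(0.0, 1.0) for _ in range(n_obs)]
    z = fmean(sample) * n_obs ** 0.5
    return 2 * NormalDist().cdf(-abs(z))


def simulate_fishing(n_subgroups=10, n_obs=20, alpha=0.05, n_studies=2_000, seed=7):
    """Share of studies in which at least one null subgroup comes out 'significant'."""
    rng = random.Random(seed)
    hits = sum(
        any(null_subgroup_p_value(rng, n_obs) < alpha for _ in range(n_subgroups))
        for _ in range(n_studies)
    )
    return hits / n_studies


print(round(familywise_error_rate(10), 3))  # → 0.401
print(f"simulated: {simulate_fishing():.3f}")
```

With ten subgroups and no real effect anywhere, roughly four studies in ten can report a "finding".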

As shown over and over again when significance tests are applied, people have a tendency to read ‘not disconfirmed’ as ‘probably confirmed.’ Standard scientific methodology tells us that when there is only, say, a 10% probability that pure sampling error could account for the observed difference between the data and the null hypothesis, it would be more ‘reasonable’ to conclude that we have a case of disconfirmation. Especially if we perform many independent tests of our hypothesis and they all give about the same 10% result as our reported one, I guess most researchers would count the hypothesis as even more disconfirmed.
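One standard way to quantify "even more disconfirmed" is Fisher's method for combining independent p-values. This is a technique brought in here for illustration, not one the text itself uses. Under the joint null, X = −2 Σ ln pᵢ follows a chi-square distribution with 2k degrees of freedom, and for even degrees of freedom its tail probability has a closed form. The sketch assumes the k tests are independent and their p-values continuous.

```python
import math


def fisher_combined_p(p_values):
    """Combined p-value by Fisher's method (independent tests assumed)."""
    k = len(p_values)
    half_x = -sum(math.log(p) for p in p_values)  # X / 2
    # Chi-square survival function with 2k df:
    # P(X > x) = exp(-x/2) * sum_{j=0}^{k-1} (x/2)^j / j!
    term, total = 1.0, 1.0
    for j in range(1, k):
        term *= half_x / j
        total += term
    return math.exp(-half_x) * total


print(round(fisher_combined_p([0.10]), 3))      # a single test: 0.1
print(round(fisher_combined_p([0.10] * 5), 3))  # five tests at 0.10: about 0.011
```

Five independent results that are each "only" 0.10 jointly amount to much stronger evidence against the null than any one of them.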


We should never forget that the underlying parameters we use when performing significance tests are model constructions. Our p-values mean nothing if the model is wrong. And most importantly: statistical significance tests DO NOT validate models! If you run a regression and get significant values (p < .05) on the coefficients, that only means that if the model is right and the true coefficients are zero, it would be unlikely (less than a 5% chance) to get p-values that low. But one of the possible reasons for the result, a reason you can never dismiss, is that your model simply is wrong!
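A classic illustration from econometrics is spurious regression. The sketch below regresses one random walk on another, generated independently, using ordinary least squares with the usual i.i.d.-error t-statistic. That model is wrong for this data because the errors are nonstationary and autocorrelated, yet the slope is "significant" far more often than the nominal 5%. Series length, number of replications, and seed are arbitrary assumptions.

```python
import random
from itertools import accumulate
from statistics import fmean


def ols_slope_t(xs, ys):
    """Slope and its conventional t-statistic from simple OLS (i.i.d. errors assumed)."""
    n = len(xs)
    mx, my = fmean(xs), fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    intercept = my - slope * mx
    rss = sum((y - intercept - slope * x) ** 2 for x, y in zip(xs, ys))
    se_slope = (rss / (n - 2) / sxx) ** 0.5
    return slope, slope / se_slope


def random_walk(rng, length):
    """Cumulative sum of standard normal shocks."""
    return list(accumulate(rng.gauss(0.0, 1.0) for _ in range(length)))


def spurious_rejection_rate(n_pairs=500, length=100, seed=3):
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_pairs):
        x = random_walk(rng, length)
        y = random_walk(rng, length)  # generated independently of x
        _, t = ols_slope_t(x, y)
        if abs(t) > 1.96:  # nominal 5% two-sided test (normal approximation)
            hits += 1
    return hits / n_pairs


print(f"share of 'significant' slopes: {spurious_rejection_rate():.2f}")
```

The low p-values here reflect the misspecified model, not any relationship between the series.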

The present excessive reliance on significance testing in science is disturbing and should be fought. But it is also important to put significance testing abuse in perspective. The real problem in today’s social sciences is not significance testing per se. No, the real problem has to do with the unqualified and mechanistic application of statistical methods to real-world phenomena, often without even the slightest idea of how the assumptions behind the statistical models condition and severely limit the value of the inferences made.